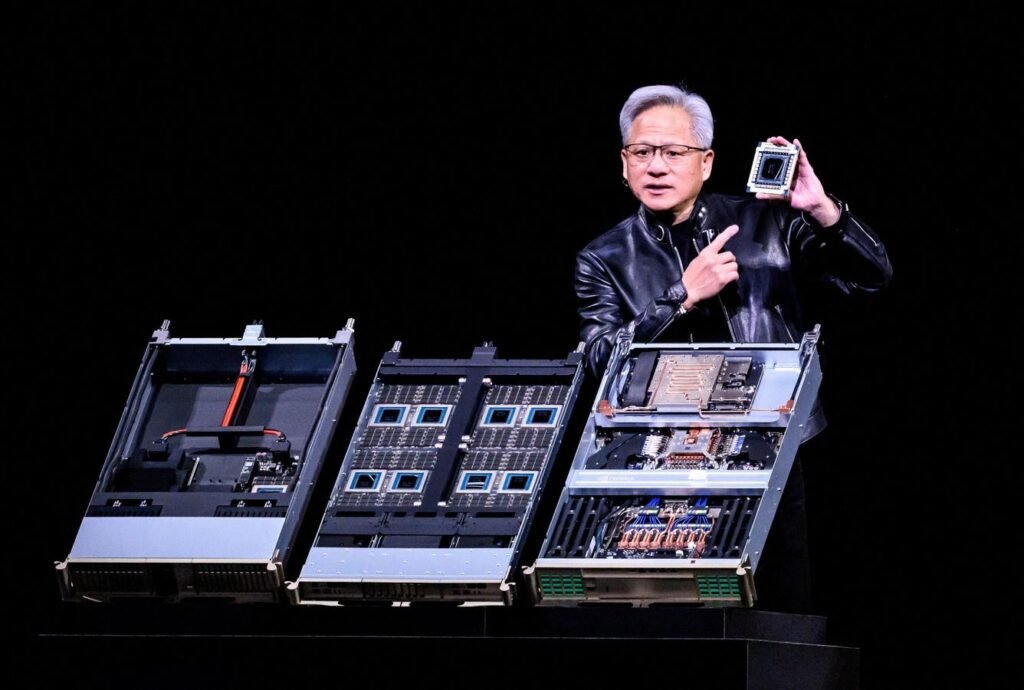

Nvidia CEO Jensen Huang introduces Vera Rubin, a next-generation artificial intelligence data center platform during the keynote address at the company’s annual GTC developer conference in San Jose, California, on March 16, 2026. (Photo by JOSH EDELSON/AFP via Getty Images)


At Nvidia’s GTC 2026 conference in San Jose, Jensen Huang did something unusual even by Silicon Valley standards. He didn’t just outline a roadmap. He quantified the future of artificial intelligence. He declared, “I think the demand for computers has increased by a million times in the last two years.”

Huang now projects at least a trillion dollars in demand for Nvidia’s Blackwell and Vera Rubin systems by 2027, doubling the company’s previous estimate of $500 billion from just a year ago. But in the weeks since that announcement, new details have made one thing clear. The headline number is already out of date. This is not a ceiling. It’s a moving target.

AI acceleration is the real story

The most important update isn’t that Nvidia is seeing a trillion dollars in demand. It’s how fast that number changes. At GTC, Huang emphasized that computing demand has been virtually off the charts, describing growth of several orders of magnitude in just a few years. This means that while the $1 trillion demand figure seems huge, it could be revised upward again within months.

This acceleration is now visible across the whole stack. Nvidia is no longer scaling on a predictable semiconductor cycle. It scales alongside the expansion of AI itself.

Market expansion due to AI

When a new technology emerges, such as industrial automation or artificial intelligence, it is difficult to predict its future market size. The reason is that as adoption grows, usage increases and new types of users enter the market. This expands the market itself. A good example of AI-driven expansion is the software development market. While most AI-based vibe coding today is done by software engineers, we expect that non-technical users, such as business analysts, will also start building apps without prior technical knowledge. This means the total number of users in the AI vibe coding market will grow dramatically compared to the existing software development market. A breakthrough technology like AI not only increases its own adoption but also expands the size of the market it serves.

Inference as the inflection point

Nvidia is no longer positioned only for training large models. It now explicitly builds for inference at scale, the continuous process of running AI systems in real time. Huang boldly announced at GTC, “The inference inflection has arrived.” He also explained why by saying, “AI must now think... AI must read... Finally, AI is able to do productive work. Therefore, the inflection point of inference has been reached.”

As agentic AI systems start to take off, AI workloads become persistent. These systems do not wait for prompts. They operate continuously, producing results, making decisions and executing workflows. This change is already reshaping Nvidia’s entire product roadmap. Inference is becoming central to Nvidia’s product strategy. The company has introduced new architectures specifically designed to accelerate real-time AI processing. These systems are built to complement GPUs, dramatically improving latency and token throughput.

This marks a clear change of position. Nvidia isn’t just defending its leadership in training. It is trying to own inference, the segment of the AI market that is expected to generate most of the long-term demand.

The Vera Rubin moment

Nvidia’s new Vera Rubin platform is now scaling into production, with systems expected to launch on cloud infrastructure in the second half of 2026. Bernstein quantified Vera Rubin’s ROI: “The upcoming platform, which will begin shipping in the second half of 2026, can deliver approximately 5x better inference performance and 3.5x stronger training performance than current systems.”

Vera Rubin isn’t just a faster chip. It’s a complete system architecture designed to power what Nvidia calls AI factories. These are large-scale, always-on computing environments optimized for heavy workloads. Recent announcements reinforce just how far Nvidia is pushing this model. The company introduced new rack-level systems, new CPUs designed specifically for agentic AI, and integrated architectures that combine GPUs, networking, and storage into a single platform.

At the same time, Nvidia is tackling one of the biggest bottlenecks in AI infrastructure: data movement. Newly introduced storage architectures are designed to eliminate constraints around context memory and token throughput, improving efficiency for large-scale inference workloads.

The competition is heating up

While Nvidia remains dominant, the latest developments show that the ecosystem is evolving. Alternative inference providers are gaining ground. Reportedly, Google is exploring a partnership with Marvell to create chips for AI inference. This is in addition to Google’s in-house Tensor Processing Units, which have already found success. Separately, hyperscalers continue to invest in custom silicon. AI companies themselves are beginning to diversify their computing strategies.

AI demand still exceeds supply

Despite the scale of Nvidia’s raised forecasts, one constraint hasn’t changed: AI supply still lags behind demand. The company continues to ramp up production, but the reality is that hyperscalers and enterprise customers are still competing for access to compute. This imbalance is not a temporary issue. It is a defining feature of the current AI cycle because, for the first time, compute is not just a resource. It is the limiting factor on the growth of the market. Artificial intelligence is not a static market. It’s an expanding market, constrained by compute.

It is easy to read Huang’s trillion-dollar projection as ambitious. In practice, it may well be revised upward. The real story isn’t that Nvidia sees a trillion-dollar opportunity. It’s that the industry is scaling faster than even Nvidia expected. In this world, companies that control compute won’t just participate in the next phase of artificial intelligence. They will define it.