The AI world has been abuzz recently with news and rumors that Google’s latest TPU, Ironwood, is powering the Gemini 3 model, which surpasses OpenAI’s models on many metrics, including intelligence and performance. Now The Information, as repeated by Bloomberg and CNBC, reports that Alphabet’s Google is preparing to market its TPUs beyond Google Cloud, with Meta Platforms as the main design win. Google is also rumored to be pitching the Ironwood Pod to other hyperscalers and large enterprises as an alternative to Nvidia. As a result, Alphabet is up nearly 50% over the past month, while Nvidia is down more than 7%. But another shoe is about to drop. (Note that this story contains my analyst’s guesswork.)

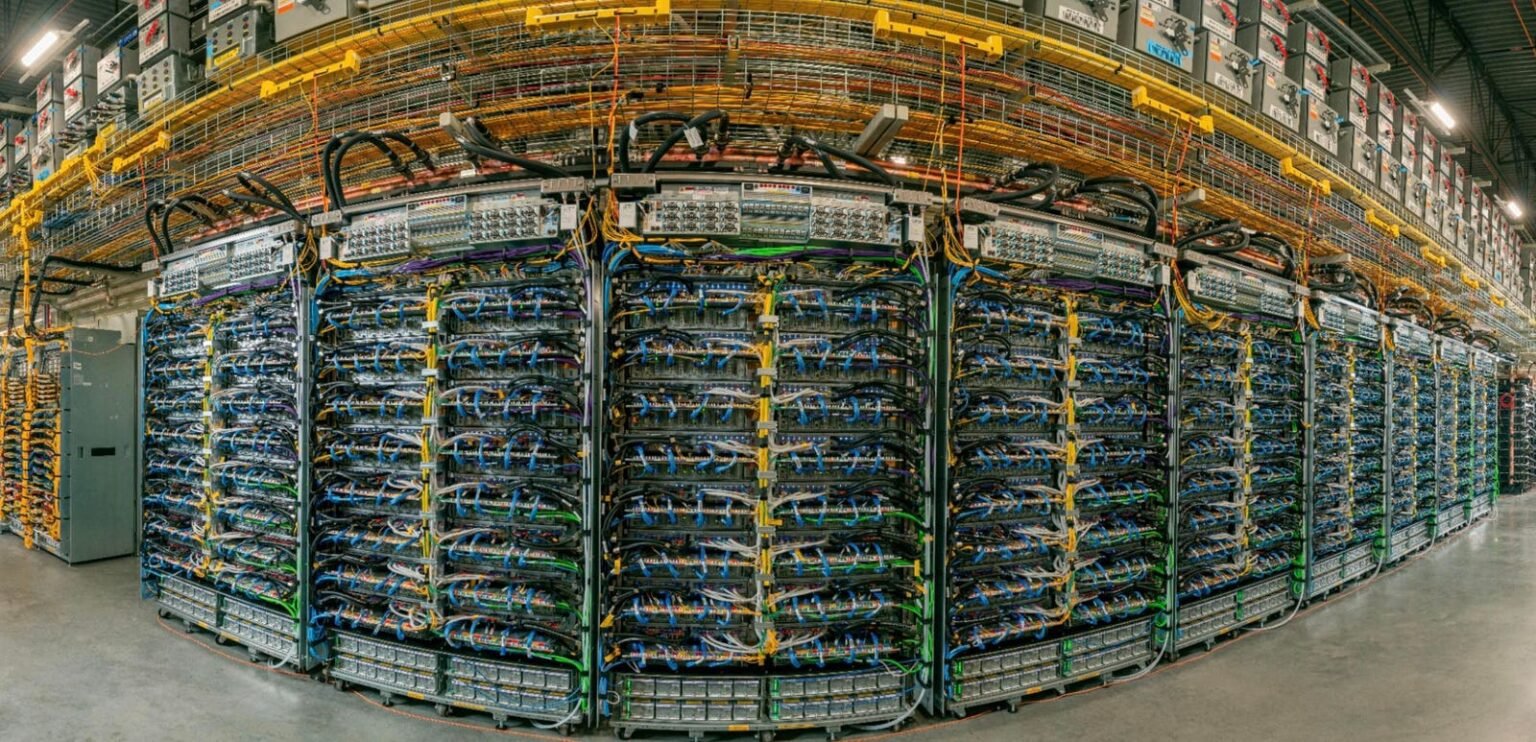

The Ironwood TPU Pod.

My point of view

Historically, TPUs have been confined to Google’s fleet and exposed only as a managed service in Google Cloud. That restriction has limited TPUs primarily to Google workloads. Now, however, Google is rumored to be actively promoting TPUs for on-prem or colocation deployment at customer sites, including banks, HFT shops and large cloud customers.

As for Ironwood, with 9,216 TPUs in a Pod connected via fiber optic switching networks, it’s Google’s first TPU specifically designed for the “age of inference.”

Internal Google Cloud forecasts cited in press reports suggest that Google management believes wider TPU adoption could, over time, capture revenue on the order of 10% of Nvidia’s current data center revenue run rate. That would mean tens of billions of dollars in future annual TPU revenue if the strategy pans out.

The other shoe

The concrete negotiations currently described in the press are between Google and Meta. There is no public indication that Amazon Web Services (AWS) or Microsoft Azure is close to offering native TPU instances on its own cloud. However, I did see some breadcrumbs on the floor of SuperComputing last week in St. Louis.

Cirrascale, a specialized provider of high-performance cloud services, had the Google Cloud logo at its booth. While the staff was clear that nothing else could be shared without an NDA, I have to wonder if Cirrascale was planning to announce a Google Cloud deal before a minor scheduling hurdle arose. The booth signage had evidently already been ordered and delivered, and the image below suggests they have a deal. Which begs the question: who else has such a setup, and when can the news go live?

Cirrascale showed off the Google Cloud logo at SC25. (Image: The Author)

Strategic framework

Meta’s interest is framed alongside that of other marquee external users. For example, Apple reportedly used large TPUv4/v5p clusters to train Apple Intelligence models via Google Cloud. Commentary on the “AI chip wars” argues that multi-cloud AI customers want a second source to Nvidia that is available at meaningful scale. External TPU sales and Meta-scale reference deployments are seen as Google’s attempt to become that second source, potentially shifting some AI capital spending away from Nvidia, and possibly AMD, to Alphabet if Google’s silicon and software stack prove competitive.

I’d like to note that Google currently can’t match the Nvidia AI ecosystem, nor its pervasive software stack. So, if my hunch is accurate, this should be seen as an inevitable evolution in the AI ecosystem and supply chain, caused more by supply/demand imbalances than by any competitive inadequacy in Nvidia’s GPUs.

Disclosures: This article expresses the views of the author and should not be construed as advice to buy or invest in the companies listed. My company, Cambrian-AI Research, is fortunate to have many semiconductor companies as our clients, including Baya Systems, BrainChip, Cadence, Cerebras Systems, D-Matrix, Esperanto, Flex, Groq, IBM, Intel, Micron, NVIDIA, Qualcomm, Graphcore, SiMa.ai, and dozens of investors. For more information, visit our website at https://cambrian-AI.com.